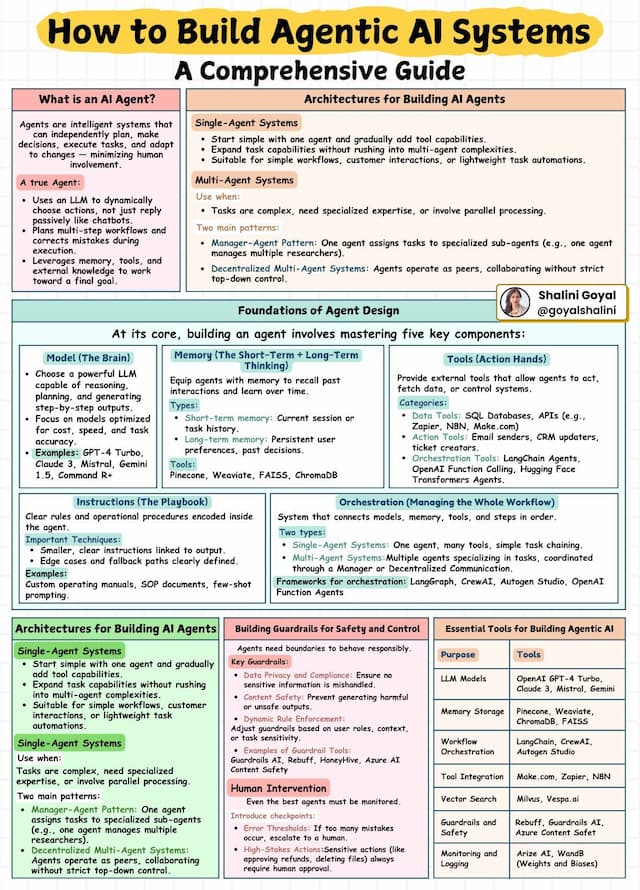

Why LangGraph Matters for Controlling LLM Hallucinations

Most teams try to fight LLM hallucinations by “using a bigger model” or “adding RAG on top,” but the results are often inconsistent and fragile. The core issue is not only model quality but also the lack of a reliable, stateful workflow around the model: without one, there is no explicit place to validate, correct, or safely route uncertain answers. LangGraph addresses this by modeling LLM applications as stateful graphs in which retrieval, generation, grading, self-correction, and human review become first-class nodes in a controlled process. This article explains why LangGraph is well suited to reducing hallucinations in production systems and outlines concrete design patterns you can adopt immediately.

Dec 15, 2025
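
To make the idea of a stateful graph concrete, here is a minimal sketch in plain Python of the workflow described above: retrieve, generate, grade, then either finish, retry, or escalate to a human. It deliberately does not use LangGraph's API. Every name in it (`State`, `NODES`, `run`, the node functions, `max_attempts`) is hypothetical, the "LLM" is a hard-coded stand-in, and the groundedness check is a toy substring match. In LangGraph itself the same shape would be built with a `StateGraph`, nodes, and conditional edges.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

END = "END"  # sentinel meaning "stop the workflow"


@dataclass
class State:
    """Shared state passed through every node (hypothetical schema)."""
    question: str
    max_attempts: int = 2
    documents: List[str] = field(default_factory=list)
    answer: str = ""
    grounded: bool = False
    attempts: int = 0
    trace: List[str] = field(default_factory=list)


def retrieve(state: State) -> str:
    # Stand-in for a retriever: returns one fixed document.
    state.documents = ["LangGraph models LLM applications as stateful graphs."]
    return "generate"


def generate(state: State) -> str:
    # Stand-in for an LLM call. The first draft is deliberately unsupported
    # so that the grading step has something to catch.
    state.attempts += 1
    if state.attempts == 1:
        state.answer = "LangGraph is a vector database."
    else:
        state.answer = state.documents[0]
    return "grade"


def grade(state: State) -> str:
    # Toy groundedness check: the answer must appear in a retrieved document.
    state.grounded = any(state.answer in doc for doc in state.documents)
    if state.grounded:
        return END
    if state.attempts < state.max_attempts:
        return "generate"  # self-correction: try again
    return "human_review"  # out of retries: route to a person


def human_review(state: State) -> str:
    # Stand-in for a human-in-the-loop step.
    state.answer = "[escalated to a human reviewer]"
    return END


# Each node mutates the state and returns the name of the next node.
NODES: Dict[str, Callable[[State], str]] = {
    "retrieve": retrieve,
    "generate": generate,
    "grade": grade,
    "human_review": human_review,
}


def run(state: State, entry: str = "retrieve") -> State:
    node = entry
    while node != END:
        state.trace.append(node)
        node = NODES[node](state)
    return state
```

Calling `run(State(question="What is LangGraph?"))` visits `retrieve`, `generate`, `grade`, `generate`, `grade` and ends with a grounded answer. With `max_attempts=1`, the ungrounded first draft is routed to `human_review` instead of being returned. The point of the sketch is that validation and routing are explicit steps in the workflow, not something left to the model.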